Systems: Difference between revisions

Revision as of 01:05, 16 September 2025

The Nazarbayev University (NU) High Performance Computing team currently operates three main facilities: Irgetas, Shabyt, and Muon. A brief overview of each is given below.

Irgetas cluster (expected deployment: September 2025)

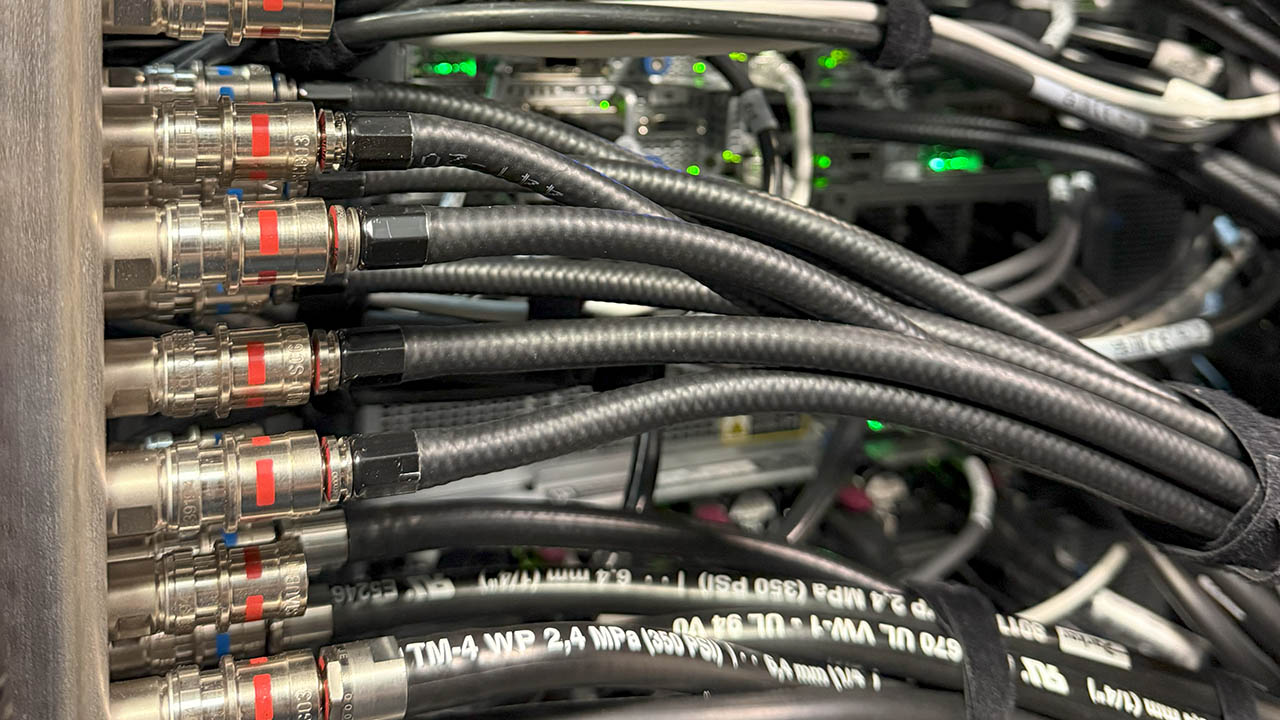

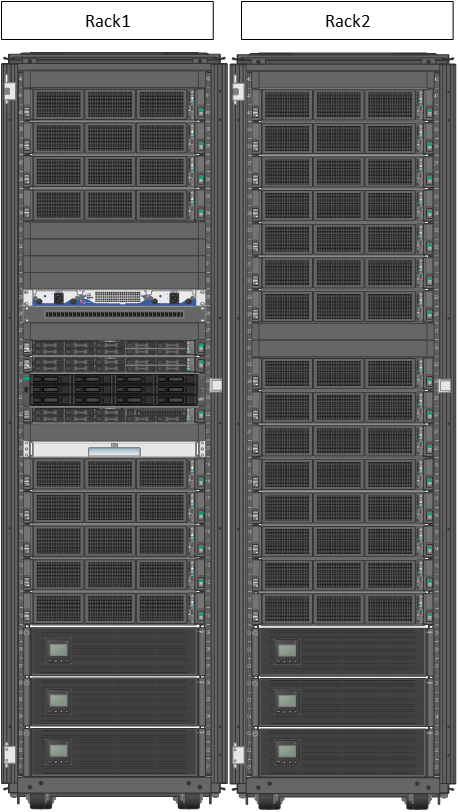

The Irgetas cluster is the most advanced computational facility on the NU campus. Direct liquid cooling gives it high compute density and efficiency. Manufactured by Hewlett Packard Enterprise (HPE), it has the following configuration:

- 6 GPU compute nodes. Each GPU node features:

  - Two AMD EPYC 9654 CPUs (96 cores / 192 threads, 2.4 GHz Base)

  - Four Nvidia H100 SXM5 GPUs (80 GB HBM3)

  - 768 GB DDR5-4800 RAM (12-channel)

  - 1.92 TB local SSD scratch storage

  - Two Infiniband NDR 400 Gbps adapters (800 Gbps total)

  - Rocky Linux 9.6

- 10 CPU compute nodes. Each CPU node features:

  - Two AMD EPYC 9684X CPUs (3D V-Cache, 96 cores / 192 threads, 2.55 GHz Base)

  - 384 GB DDR5-4800 RAM (12-channel)

  - 1.92 TB local SSD scratch storage

  - Infiniband NDR 200 Gbps adapter

  - Rocky Linux 9.6

- Interactive login node:

  - AMD EPYC 9684X CPU (3D V-Cache, 96 cores / 192 threads, 2.55 GHz Base)

  - 192 GB DDR5-4800 RAM (12-channel)

  - 7.68 TB local SSD scratch storage

  - Infiniband NDR 200 Gbps adapter

  - Rocky Linux 9.6

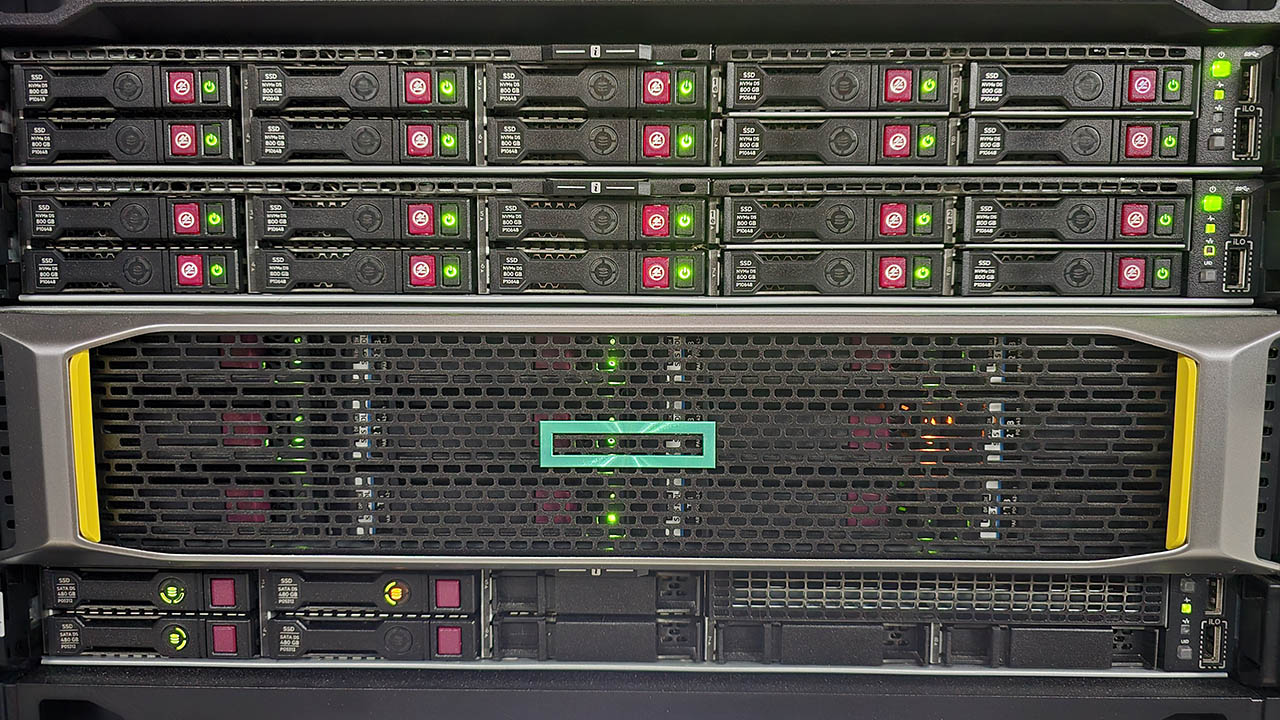

- 122 TB (raw) NVMe SSD storage server for software and user home directories:

  - Two AMD EPYC 9354 CPUs (32 cores / 64 threads, 3.25 GHz Base)

  - 768 GB DDR5-4800 RAM (12-channel)

  - Two Infiniband NDR 400 Gbps adapters (800 Gbps total)

  - 80 GB/s sustained sequential read speed

  - 20 GB/s sustained sequential write speed

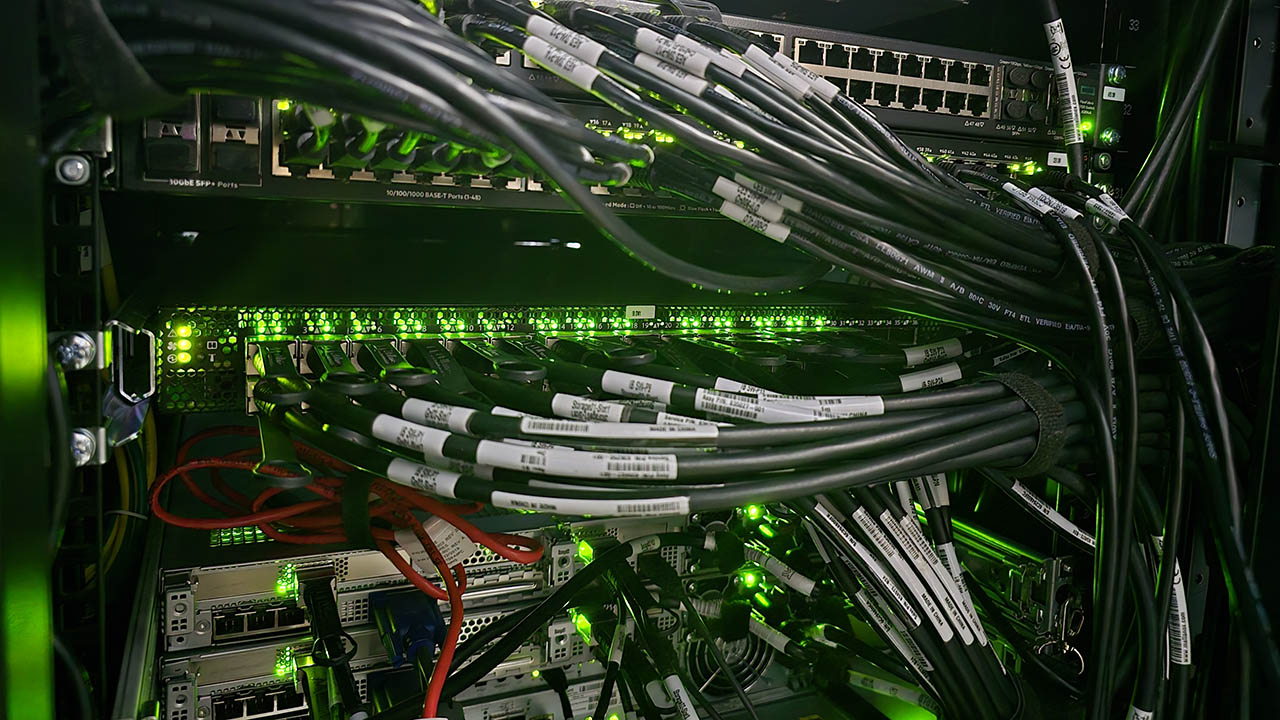

- Nvidia Infiniband NDR Quantum-2 QM9700 managed switch (compute network):

  - 64 ports (400 Gbps per port)

- HPE Aruba Networking CX 8325-48Y8C 25G SFP/SFP+/SFP28 switch (application network):

  - 48 ports (SFP28, 25 Gbps per port)

- Direct liquid cooling system:

  - Three-chiller setup with BlueBox ZETA Rev HE FC 3.2

The system is physically located in the NU data center in Block 1.
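The per-node figures listed above can be tallied into cluster-wide totals. The sketch below is only an illustration: the `NodeGroup` class and `totals` helper are hypothetical names introduced here, the core counts in parentheses are assumed to be per CPU, and the login node is left out because it is not a compute node.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeGroup:
    """One homogeneous group of compute nodes (hypothetical helper type)."""
    name: str
    nodes: int
    sockets: int            # CPUs per node
    cores_per_socket: int   # assumed: the quoted core count is per CPU
    ram_gb: int             # RAM per node
    gpus: int = 0           # GPUs per node
    gpu_mem_gb: int = 0     # memory per GPU


# Values copied from the Irgetas configuration list above.
IRGETAS = [
    NodeGroup("gpu", nodes=6, sockets=2, cores_per_socket=96,
              ram_gb=768, gpus=4, gpu_mem_gb=80),
    NodeGroup("cpu", nodes=10, sockets=2, cores_per_socket=96, ram_gb=384),
]


def totals(groups):
    """Sum cores, RAM, GPUs, and GPU memory over all node groups."""
    return {
        "cores": sum(g.nodes * g.sockets * g.cores_per_socket for g in groups),
        "ram_gb": sum(g.nodes * g.ram_gb for g in groups),
        "gpus": sum(g.nodes * g.gpus for g in groups),
        "gpu_mem_gb": sum(g.nodes * g.gpus * g.gpu_mem_gb for g in groups),
    }


if __name__ == "__main__":
    for key, value in totals(IRGETAS).items():
        print(f"{key}: {value}")
```

Under these assumptions the compute nodes add up to 3072 CPU cores, 8448 GB of RAM, and 24 GPUs with 1920 GB of GPU memory in total.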

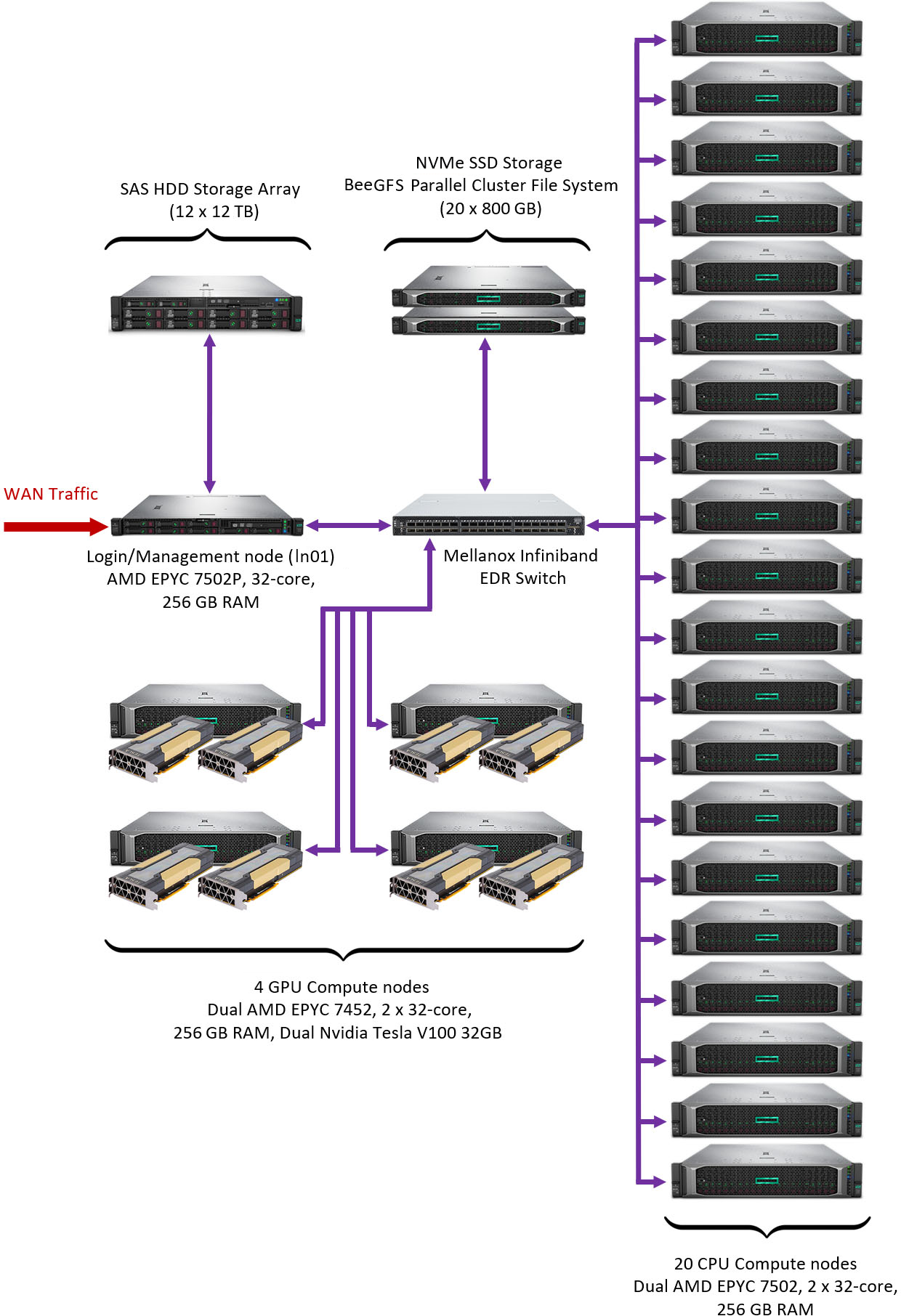

Shabyt cluster

The Shabyt cluster was manufactured by Hewlett Packard Enterprise (HPE) and deployed in 2020. For several years it served as the primary platform for computational work by NU researchers. It has the following hardware configuration:

- 20 CPU compute nodes. Each CPU node features:

  - Two AMD EPYC 7502 CPUs (32 cores / 64 threads, 2.5 GHz Base)

  - 256 GB DDR4-2933 RAM (8-channel)

  - Infiniband EDR 100 Gbps adapter

  - Rocky Linux 8.10

- 4 GPU compute nodes. Each GPU node features:

  - Two AMD EPYC 7452 CPUs (32 cores / 64 threads, 2.3 GHz Base)

  - Two Nvidia V100 GPUs (32 GB HBM2)

  - 256 GB DDR4-2933 RAM (8-channel)

  - Infiniband EDR 100 Gbps adapter

  - Rocky Linux 8.10

- Interactive login node:

  - AMD EPYC 7502P CPU (32 cores / 64 threads, 2.5 GHz Base)

  - 256 GB DDR4-2933 RAM (8-channel)

  - Infiniband EDR 100 Gbps adapter

  - Rocky Linux 8.10

- 16 TB (raw) internal NVMe SSD storage for software and user home directories (/shared)

- 144 TB (raw) HPE MSA 2050 SAS HDD array for backups and large data storage for user groups (/zdisk)

- Mellanox Infiniband EDR v2 managed switch (compute network):

  - 36 ports (100 Gbps per port)

- Theoretical peak performance of the CPU subsystem is about 61 TFLOPS (double precision)

- Theoretical peak performance of the GPU subsystem is about 56 TFLOPS (double precision) or 896 TFLOPS (half precision)

The system is assembled in two racks and is physically located in the NU data center in Block C2.
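The theoretical peak figures quoted for Shabyt can be reproduced, approximately, from the node counts and clock speeds in the list. The sketch below is an illustration rather than the team's own calculation, and it rests on assumptions not stated on this page: 16 double-precision FLOPs per cycle per core for these EPYC CPUs, per-GPU peaks of 7 TFLOPS (double precision) and 112 TFLOPS (half precision, tensor cores) for the V100, and a CPU total that counts the CPUs in both the CPU and GPU nodes but not the login node.

```python
# Theoretical peak = nodes x sockets x cores x base clock (GHz) x FLOPs/cycle/core.

# ASSUMPTION (not from this page): 16 double-precision FLOPs per cycle per core.
DP_FLOPS_PER_CYCLE = 16

# ASSUMPTION (not from this page): per-GPU peak values for the V100.
V100_FP64_TFLOPS = 7.0
V100_FP16_TENSOR_TFLOPS = 112.0


def cpu_peak_tflops(nodes, sockets, cores, base_ghz,
                    flops_per_cycle=DP_FLOPS_PER_CYCLE):
    """Return the theoretical double-precision peak of a node group in TFLOPS."""
    gflops = nodes * sockets * cores * base_ghz * flops_per_cycle
    return gflops / 1000.0


# Node counts, core counts, and base clocks are taken from the list above.
cpu_nodes = cpu_peak_tflops(nodes=20, sockets=2, cores=32, base_ghz=2.5)
gpu_node_cpus = cpu_peak_tflops(nodes=4, sockets=2, cores=32, base_ghz=2.3)
cpu_total = cpu_nodes + gpu_node_cpus

num_gpus = 4 * 2  # 4 GPU nodes with two V100 GPUs each
gpu_fp64 = num_gpus * V100_FP64_TFLOPS
gpu_fp16 = num_gpus * V100_FP16_TENSOR_TFLOPS

if __name__ == "__main__":
    print(f"CPU subsystem, double precision: {cpu_total:.1f} TFLOPS")
    print(f"GPU subsystem, double precision: {gpu_fp64:.0f} TFLOPS")
    print(f"GPU subsystem, half precision:   {gpu_fp16:.0f} TFLOPS")
```

With these inputs the CPU estimate comes to roughly 60.6 TFLOPS, which rounds to the quoted figure of about 61 TFLOPS, and the GPU estimates come to 56 and 896 TFLOPS. Sustained performance on real workloads is lower than these theoretical peaks.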

Muon cluster

Muon is an older cluster used by faculty of the Physics Department. It was manufactured by HPE and first deployed in 2017. It has the following hardware configuration:

- 10 CPU compute nodes. Each CPU node features:

  - Intel Xeon E5-2690 v4 CPU (14 cores / 28 threads, 2.6 GHz Base)

  - 64 GB DDR4-2400 RAM (4-channel)

  - 1 Gbps Ethernet network adapter

  - Rocky Linux 8.10

- Interactive login node:

  - Intel Xeon E5-2640 v4 CPU (10 cores / 20 threads, 2.4 GHz Base)

  - 64 GB DDR4-2400 RAM (4-channel)

  - 10 Gbps Ethernet network adapter (WAN traffic)

  - 10 Gbps Ethernet network adapter (compute traffic)

  - Rocky Linux 8.10

- 2.84 TB (raw) SSD storage for software and user home directories (/shared)

- 7.2 TB (raw) HDD RAID 5 storage for backups and large data storage for user group (/zdisk)

- HPE 5800 Ethernet switch (compute network)

The system is physically located in the NU data center in Block 1.

Other facilities on campus

There are several other computational facilities at NU that are not managed by the NU HPC Team. Brief information about them is provided below. All inquiries regarding their use for research projects should be directed to the person responsible for each facility.

| Cluster name | Short description | Contact details |
|---|---|---|
| High-performance bioinformatics cluster "Q-Symphony" | HPE Apollo R2600 Gen10 cluster | Ulykbek Kairov, Head of Laboratory - Leading Researcher, Laboratory of bioinformatics and systems biology, Private Institution National Laboratory Astana. Email: ulykbek.kairov@nu.edu.kz |
| Computational resources for AI infrastructure at NU | NVIDIA DGX-1 (1 unit), NVIDIA DGX-2 (2 units), DGX A100 (4 units) | Yerbol Absalyamov, Technical Project Coordinator, Institute of Smart Systems and Artificial Intelligence, Nazarbayev University. Email: yerbol.absalyamov@nu.edu.kz; Makat Tlebaliyev, Computer Engineer, Institute of Smart Systems and Artificial Intelligence, Nazarbayev University. Email: makat.tlebaliyev@nu.edu.kz |